Tangram Tangle

If you split the square into these two pieces, it is possible to fit the pieces together again to make a new shape. How many new shapes can you make?

Problem

The square below has been cut into two pieces:

It is possible to fit the pieces together again to make a new shape.

If you must match whole sides to each other so that the corners meet, how many new shapes can you make?

Watch out for shapes which are really the same but just turned round or flipped over.

You could try out your ideas using the interactivity below, or download this sheet which contains four copies of the square.

Getting Started

Cut out the square and the two pieces and try to make some shapes. (You could print off this sheet which contains four copies of the square.)

How will you record your findings?

How do you know you have found all the shapes?

Student Solutions

Thank you to everybody who sent us their ideas about this activity. We were sent lots of different solutions, but only a few children found all the possible ways of making a new shape.

We received a couple of solutions from the children at British School Al Khubairat in the UAE. Rayya and Emily sent in this explanation and picture:

The instructions said that the corners have to match up; that was the only rule. We decided that we can flip it and rotate it. If we weren't allowed to flip it, then there would only be 4 solutions/new shapes.

This is interesting, as we hadn't made clear what you were allowed to do with each part of the tangram. If reflecting the pieces isn't allowed, then I think there are only four possible solutions, including the starting shape. I think the rule about shapes that are turned round or flipped over is talking about the final shape. So, for example, solutions 4 and 6 in Rayya and Emily's picture above are actually the same solution, because they are reflections of each other.

Lucas and Gregory from Coppice Primary School in England described one of the shapes they made:

It can make a parallelogram.

I think they are talking about Solution 3 in Rayya and Emily's picture above. We had several solutions saying that they thought this was a rhombus, but Lucas and Gregory are correct. Can you see why it is a parallelogram and not a rhombus?

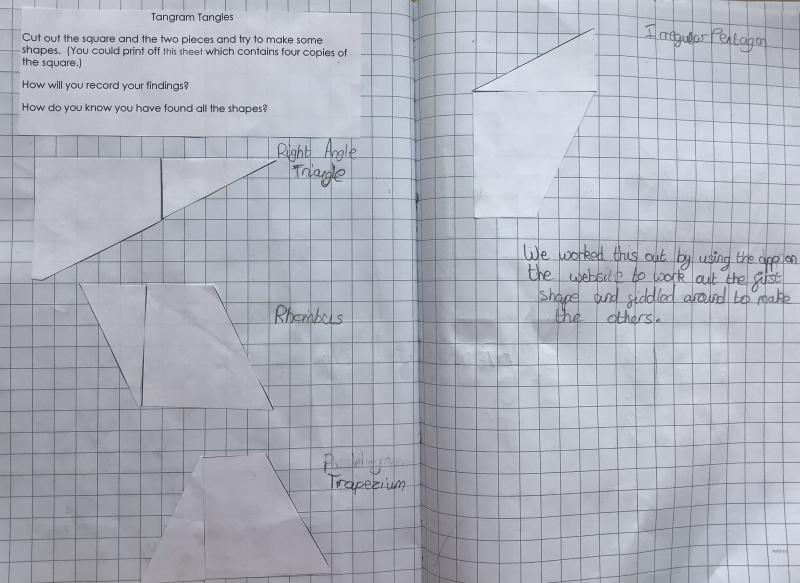

Grace and Erin from Alexandra Junior School in England shared their method with us:

To work this out we took the larger half of the square and rotated it around to the left.

Then we got the second half of the square and fitted it with the first half. We ended up with a right-angled triangle.

Next we rotated the larger half the other way and put the other half next to it and realised that we had made a rhombus.

After that we did the same thing with the next squares and came up with a trapezium and an irregular pentagon.

Good ideas! I like the way you started with one arrangement and then changed it slightly to find another arrangement. I wonder if there's a way of doing this more systematically to find all the possible solutions?

Chloe from Eastcourt Independent School in England sent in this explanation:

Here are some of the ways you can make some new shapes. You can turn the trapezoid 90° and leave the triangle the same way, and you will get a 4-sided shape.

Another way you can do this is to rotate the trapezoid around and put the triangle on the top of the trapezoid, and you get a large right-angled triangle.

You can also rotate the triangle 90° and keep the trapezoid the same way, then put the triangle at the base of the trapezoid and you’ll get a new shape.

These are some of the shapes that I have found, but there must be more shapes. Please tell me if you find any more.

Thank you for sending in these thoughts, Chloe. 'Trapezoid' is another word for 'trapezium', and Chloe is right - when the square was cut into two pieces at the beginning, we ended up with a triangle and a trapezium. Chloe is also right that there are more shapes that can be found, and some of these are difficult to name!

Bertie from Epsom Primary School in England had a more systematic approach, and managed to find every possible solution:

I made shapes by matching the triangle to each side of the larger shape. I matched the different sides in length. Each side of the larger shape can only match to one side of the triangle as the triangle has 3 different sides (it is a scalene triangle).

This made 4 different shapes:

- a square (which we started with)

- a parallelogram (I wasn't sure at first if this was a rhombus, but the top and bottom are the sides of the square and the left and right sides are the hypotenuse of the triangle which must be longer)

- a scalene triangle

- an irregular quadrilateral

I then realised I could make more shapes if I flip the triangle over. I then did the same thing, which gave the following shapes:

- an irregular pentagon

- a different irregular pentagon

- another different irregular pentagon

- a trapezium

Bertie also thought about why there were so many irregular pentagons in the right-hand column of their shapes:

To finish, I thought about why flipping the triangle gives irregular pentagons when before I only had 3- or 4-sided shapes. This is because there are 7 total sides between the 2 shapes. When I match two sides, those both disappear, but for all of the first shapes, two sides end up joining together, meaning I lose one more side and get 4-sided shapes (or, with the triangle, two sides join together TWICE).

You've thought really hard about this, Bertie, and these are excellent ideas. By keeping the larger shape fixed and only reflecting the triangle, you've found every possible solution. If we also reflected the larger shape, we'd get eight more arrangements, but each would be a reflection of one of your solutions and so wouldn't count as a new shape. (The reflections of two of these would actually be identical to two of your solutions, as the shapes are symmetrical - can you see which ones those would be?)
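Bertie's side-counting argument can be written as a small calculation. As a sketch, let $k$ be the number of ends of the matched side where the two neighbouring sides line up to form one straight side. (The labels $n$ and $k$ are introduced here for illustration and are not part of Bertie's solution.)

```latex
\[
n = (4 + 3) - 2 - k, \qquad k \in \{0, 1, 2\}
\]
\[
k = 0 \Rightarrow n = 5, \qquad k = 1 \Rightarrow n = 4, \qquad k = 2 \Rightarrow n = 3
\]
```

So a pentagon appears when no sides line up, a four-sided shape appears when sides line up at one end, and the large triangle appears when sides line up at both ends.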

Thank you as well to the following children, who also sent in similar solutions to this activity: Annie, Blue, Elliott, Aisha, Lucy, Billy, Elliot, Macy, Isabel, Izzy, Joshua, Ava, Abi and Kitty from Richmond Methodist Primary School in England; Ruel, Ellen, Kayhan, Ferdia, Lucinda, Maddy, Chloe, Georgia, Tom, Felix, Lucas and Oscar from Clifton Hill Primary School in Australia; Dhruv from The Glasgow Academy in the UK; Millie from Innsworth Infant School in the UK; Charlotte, Gemma, Zali, Elise and Olivia from All Hallows School in Australia; Jayden and Anna from Mountains Christian College in Australia; Ra from Australia; Ethadam from British School Al Khubairat in the UAE; and Klara from Success Academy in the USA.

Teachers' Resources

Why do this problem?

This activity invites discussion amongst pupils which will encourage them to use vocabulary associated with position and transformations.

You may like to read our Let's Get Flexible with Geometry article to find out more about developing learners' mathematical flexibility through geometry.

Possible approach

It is important for children to have paper or card copies of the shapes to work on the activity, or to have access to a tablet/computer in pairs so they can use the interactivity. This sheet contains four copies of the square or you could make your own on squared paper (the line dividing the square in two is drawn from one corner to the midpoint of the opposite side). It may be a good idea to use paper which is coloured on one side only and talk about whether the new shape should be the same colour all over.
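If you are drawing the pieces yourself, the side lengths follow from the cut described above. As a sketch, assume the square has side $s$ (the symbols $s$ and $c$ are introduced here for illustration). The triangle's two shorter sides are then $s$ and $s/2$, and Pythagoras' theorem gives the length $c$ of the cut:

```latex
\[
c = \sqrt{s^2 + \left(\tfrac{s}{2}\right)^2} = \frac{\sqrt{5}}{2}\,s \approx 1.118\,s
\]
```

The triangle therefore has sides $s/2$, $s$ and $c$, all different, while the larger piece has sides $s/2$, $s$, $s$ and $c$. Because $c > s$, a shape with two sides of length $s$ and two of length $c$ is a parallelogram rather than a rhombus.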

You could introduce the task using the interactivity projected onto the board, or by inviting learners to gather round some large printed pieces. You could begin by showing them the square made of the two pieces and simply ask what they see. As there are no right/wrong answers to this question, it is an accessible entry point for all children. Use the comments that learners make to introduce the task itself.

Encouraging learners to be systematic in their discovery of 'new shapes' is important if they are going to be asked how they 'know' they have found every solution. Look out for those children who have developed a system and ask them to share their method with the whole group. For example, they might keep one shape fixed and find all the ways of placing the second shape. (There are many ways to be systematic, so try not to assume that everyone will use the same way as you!)
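For teachers who would like to check the count for themselves, here is a minimal Python sketch of one systematic approach: keep the larger piece fixed, try the triangle against every side of matching length, and try it both ways round. The coordinates (a square of side 2) and all function names are assumptions made for this illustration. The sketch only counts how many sides each combined shape has, by looking for corners where the two pieces' angles add up to a straight line; it does not draw the shapes or prove that they are all different.

```python
import math

# Assumed coordinates (not given in the source): a square of side 2,
# cut from the corner (0, 0) to the midpoint (2, 1) of an opposite side.
TRIANGLE = [(0, 0), (2, 0), (2, 1)]
TRAPEZIUM = [(0, 0), (2, 1), (2, 2), (0, 2)]


def interior_angle(poly, i):
    """Interior angle in degrees at vertex i (valid for convex polygons)."""
    p, q, r = poly[i - 1], poly[i], poly[(i + 1) % len(poly)]
    v1 = (p[0] - q[0], p[1] - q[1])
    v2 = (r[0] - q[0], r[1] - q[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    cross = v1[0] * v2[1] - v1[1] * v2[0]
    return math.degrees(math.atan2(abs(cross), dot))


def sides(poly):
    """Yield (squared length, angle at start, angle at end) for each side."""
    n = len(poly)
    for i in range(n):
        a, b = poly[i], poly[(i + 1) % n]
        sq_len = (b[0] - a[0]) ** 2 + (b[1] - a[1]) ** 2
        yield sq_len, interior_angle(poly, i), interior_angle(poly, (i + 1) % n)


def side_counts():
    """Number of sides of the combined shape for every whole-side placement."""
    counts = []
    for fixed_len, f_start, f_end in sides(TRAPEZIUM):
        for tri_len, t_start, t_end in sides(TRIANGLE):
            if tri_len != fixed_len:
                continue  # whole sides must match, so lengths must be equal
            # The triangle can sit against the side either way round.
            for t_angles in ((t_start, t_end), (t_end, t_start)):
                # Count ends of the shared side where the angles make 180 degrees.
                straight = sum(
                    math.isclose(f + t, 180.0, abs_tol=1e-9)
                    for f, t in zip((f_start, f_end), t_angles)
                )
                # 4 + 3 sides, minus the 2 matched sides, minus merged sides.
                counts.append(4 + 3 - 2 - straight)
    return sorted(counts)


print(side_counts())  # [3, 4, 4, 4, 4, 5, 5, 5]
```

The eight placements include the original square, and the side counts (one triangle, four quadrilaterals, three pentagons) agree with the shapes Bertie listed.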

If it doesn't come up naturally, it might be helpful to ask the group how they are keeping track of the shapes they have found. If they are using the interactivity, a screenshot could be useful, or they could sketch the shapes they have created whether they are working on screen or using card. It might help some children to have many copies of the pieces so they can create each new shape and not have to re-use the pieces to find others.

Key questions

How will you record your findings?

How do you know you have found all the shapes?

Possible extension

Invite children to make another cut so that they have three pieces. How many new shapes can they make now? What cuts make the 'best' new set of shapes?

Possible support

Having several copies of the square will mean that children can stick down each arrangement.